What Is Google Zero? The Definitive Guide to AI Search and the End of Clicks

Eric Buckley

AI SEO | LLM Citation Optimization | GEO | AEO | LLM SEO

March 14th, 2026

20 minute read

Table of Contents

- Executive summary

- Market context and timeline

- Definitions and systems

- Evidence: the economic shift from “ranking” to “being summarized”

- Case studies: how major publishers are preparing for Google Zero

- Technical realities: how AI retrieval changes what “optimization” means

- Practical playbook for US/EU SaaS and agencies

- The operating principle

- What to build: “cite‑worthy proof” plus “post‑answer utility”

- Content engineering for AI retrieval

- Distribution: the “web‑wide footprint” strategy

- Measurement and KPIs

- A deliverables table agencies can sell

- ROI scenarios (illustrative)

- The hard truth: “Google Zero” is a mindset, not a date

- FAQs

What is Google Zero: The Definitive Guide

Executive summary

“Google Zero” is a stress‑test scenario: the moment when Google Search stops sending meaningful referral traffic to third‑party websites, even if Google continues to “cite” them. The term was coined by Nilay Patel and is defined as “the moment when Google Search simply stops sending traffic … to third‑party websites.” That definition is no longer hypothetical—multiple datasets show steep declines in clicks when AI summaries appear and meaningful publisher referral drops over time.

Three forces are converging:

- Zero‑click behavior is rising (users get answers without leaving the SERP). A Similarweb analysis shows that searches with AI Overviews have materially higher zero‑click rates than searches without them (median ~60% without AI Overviews vs. ~80% with AI Overviews; average ~83%).

- AI summaries reduce outbound clicks. A Pew Research Center study of U.S. browsing behavior found that when an AI summary appears, users click traditional results less (8% of visits vs. 15% without summaries), and clicking links inside the AI summary happens in just 1% of visits.

- AI answer surfaces are expanding inside Search (AI Overviews, AI Mode, deep “fan‑out” retrieval), changing what “SEO success” means. Google says AI Overviews drive a 10%+ increase in usage for query types where Overviews appear, and that AI Mode breaks questions into subtopics and issues multiple queries (“query fan‑out”).

Analysts and publishers are reacting as if “Google Zero” is a plausible endpoint:

- Gartner predicts traditional search volume will drop 25% by 2026 due to AI chatbots/agents.

- Gartner also predicted brands’ organic search traffic will decrease by 50%+ by 2028 as consumers embrace generative AI‑powered search.

- Forrester reports rapid B2B adoption: 89% of B2B buyers adopted generative AI in less than two years (Forrester Buyers’ Journey Survey, 2024), and its 2025 Buyers’ Journey Survey indicates the share of buyers using AI tools rose to 94% (as summarized in a Forrester blog post).

- The Reuters Institute for the Study of Journalism reports publishers expect search referrals to “almost halve” (‑43%) over the next three years, and cites Chartbeat data showing Google Search traffic down 33% globally and 38% in the U.S. from Nov 2024–Nov 2025.

Major publishers are already acting: Condé Nast and peers are shifting toward subscriptions and licensing partnerships with AI platforms (e.g., OpenAI / Amazon) rather than relying on Search referrals alone.

For US/EU SaaS teams, the implication is not “SEO is dead,” but that traffic is no longer the only (or even primary) KPI. Winning in a Google Zero world means:

- Treating AI visibility as a distribution layer (inclusion, citations, brand representation).

- Building “utility moats” AI can’t fully compress (product experiences, tools, trials, community, first‑party data).

- Diversifying acquisition so that any single algorithmic surface cannot zero out growth.

Market context and timeline

The web’s last two decades ran on a simple exchange: publish → rank → earn traffic → monetize. AI search and zero‑click SERPs rewrite the exchange: publish → get summarized → maybe get cited → possibly earn a click.

A few anchor points illustrate the trajectory:

- Zero‑click is not new, but AI makes it systemic. SparkToro notes that older methodologies were biased because Google answers many queries itself, and references “zero‑click answers” as ~60% of searches in the context of modern SERP behavior.

- AI Overviews and AI Mode intensify “traffic containment.” Google’s own product description states that AI Overviews and AI Mode can use “query fan‑out,” issuing multiple related searches across subtopics and data sources, then generating a response with links.

- Outcomes diverge by query type and industry. BrightEdge reports impressions up while CTR declines, attributing a “nearly 30% reduction” in click‑through rates since May 2024.

- Publisher expectations have shifted from “growth” to “survival stress tests.” Reuters Institute survey reporting (as surfaced by Oxford) includes a projected ‑43% search referral decline over the next three years and already‑observed referral declines.

Definitions and systems

Core definitions

| Term | Practical definition | What changes for SaaS teams |
|---|---|---|
| Google Zero | A “functionally zero referral” future where Google Search no longer sends meaningful traffic to third‑party sites. Coined as “the moment when Google Search simply stops sending traffic … to third‑party websites.” | Traffic is an unreliable KPI; inclusion/representation and downstream revenue become primary. |
| Zero‑click search | Searches where users’ needs are met on the SERP (snippets, knowledge panels, AI summaries), so no external click occurs. Similarweb emphasizes it is not literally zero clicks, but a share of searches that don’t lead to visits. | Optimize for visibility and conversion paths that don’t require a click (brand preference, direct demand). |
| AEO (Answer Engine Optimization) | Optimization for being included/cited in AI‑generated answers across search/chat surfaces; often measured as “AI visibility.” | Measure “share of answer” and brand portrayal, not only rankings/clicks. |
| GEO (Generative Engine Optimization) | Often used interchangeably with AEO; emphasizes optimizing for generative retrieval + synthesis rather than classic ranking. (Terminology varies by vendor/community.) | Focus on retrievability, entity clarity, evidence, and distribution breadth. |
| AI Overviews | AI summaries that appear in Google Search results; can reduce clicks to websites. Pew shows fewer clicks when summaries appear. | Rankings alone can’t protect traffic; you must become cite‑worthy and build post‑summary value. |
| AI Mode | A conversational, multi‑turn Search mode; Google says it uses query fan‑out and can issue many queries; Deep Search can issue “hundreds of searches.” | Treat Search as a synthesis engine, not a list of blue links; optimize for multi‑angle coverage and structured proof. |
| Gemini (as a model) | Google’s model family used across products; Google says a “custom version of Gemini 2.5” is used in AI Mode/Overviews in the U.S. (as of May 2025). | Model changes can shift citations quickly; build resilient, web‑wide signals. |

Retrieval layers

Modern AI search experiences typically combine several “retrieval layers” before generating an answer:

- Web index retrieval (classic crawling + indexing)

- Knowledge graph / entity data (e.g., structured facts, relationships)

- Vertical databases (shopping, local, maps, finance; increasingly “actionable” datasets)

- Vector retrieval (embedding‑based similarity search for passages/sections)

- Reranking / fusion of results (hybrid retrieval often improves relevance)

- Generation with citations or link cards, then UX decisions that may reduce outbound clicks

Google confirms that AI Overviews and AI Mode may use query fan‑out (multiple related searches) and that links shown are tied to the response-generation process.

E‑E‑A‑T vs “ranking signals”

E‑E‑A‑T (Experience, Expertise, Authoritativeness, Trustworthiness) is a quality framework used in evaluating content quality, but Google repeatedly frames it as guidance rather than a single “E‑E‑A‑T score.” Gartner explicitly recommends content demonstrate “expertise, experience, authoritativeness and trustworthiness” as quality expectations rise.

For AI search, you should think in terms of observable, machine‑readable proxies for trust and authority:

- Clear authorship and accountability (bylines, bios, org “About” pages)

- Evidence that can be extracted (data, citations, methodology)

- Structured formats (headings, lists, schema)

- Consistent entity representation across the web (brand descriptions, third‑party references)

Evidence: the economic shift from “ranking” to “being summarized”

What the best datasets agree on

AI summaries reduce clicks. Pew found:

- Traditional search result clicks: 8% with AI summary vs 15% without

- Clicks on links in the summary itself: 1% of visits with a summary
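
The Pew figures above are shares of visits, so the gap can be read either as 7 percentage points or as a relative decline. A minimal sketch of that arithmetic, using only the two numbers quoted here (the function name `relative_change` is illustrative, not from the study):

```python
def relative_change(before: float, after: float) -> float:
    """Return the relative change from `before` to `after` as a fraction."""
    if before == 0:
        raise ValueError("before must be non-zero")
    return (after - before) / before


# Pew figures quoted above: share of visits with a click on a traditional result.
click_share_without_summary = 0.15
click_share_with_summary = 0.08

point_gap = click_share_without_summary - click_share_with_summary
drop = relative_change(click_share_without_summary, click_share_with_summary)

print(f"Gap: {point_gap * 100:.0f} percentage points")
print(f"Relative change: {drop:.1%}")
```

Under these inputs the relative decline is a little under half, which is why a 7-point gap can feel much larger in referral reports than the headline numbers suggest.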

Zero‑click is higher when AI Overviews appear. Similarweb reports median zero‑click ~60% without AI Overviews and ~80% with AI Overviews (average ~83%).

CTR is down materially since Overviews expanded. BrightEdge reports a nearly 30% CTR reduction since May 2024.

Publishers report real referral impact in the wild. DCN’s member survey reports median YoY Google Search referrals down ~10% over eight weeks (May–June 2025), with non‑news down ~14% and news down ~7%.

A compact “traffic vs. AI adoption” view

Below is a directional view using directly reported points (not a complete time series):

- Google reports AI Overviews scale: 2B+ monthly users by July 2025.

- Chartbeat data in Reuters Institute reporting: Google organic search traffic to 2,500+ sites down 33% globally Nov 2024–Nov 2025.

- Pew: AI summaries correlate with substantially fewer clicks.

Analysts: why this is not “just another update”

Gartner frames the change as a channel shift: by 2026, traditional search volume drops 25% as AI answer engines replace queries that previously went to classic search. Gartner also explicitly warns of a 50%+ organic search traffic decline by 2028 due to generative AI search.

Forrester frames the change as buyer behavior shift: B2B buyers increasingly rely on AI tools for research, but still validate through trusted human sources; this compresses early funnel discovery and raises the bar for credible, defensible vendor claims.

Google’s stated counter‑narrative

Google argues AI Overviews increase satisfaction, drive more searches, and still include “links to the web.” It cites a 10%+ increase in usage for queries showing AI Overviews.

The practical takeaway for SaaS teams is not to pick a side in this debate; it is to plan for both:

- A world where AI summaries expand overall search activity,

- And a world where your share of outbound clicks declines, especially for informational demand.

Case studies: how major publishers are preparing for Google Zero

Publishers are canaries for SaaS because they live and die on marginal changes in referral distribution. Their responses cluster into four defensible strategies: build direct demand, license content/data, create proprietary utility, and become citation‑worthy with proof.

Condé Nast: diversify away from Search and license into AI systems

Condé Nast’s verifiable moves include:

- Licensing partnership with OpenAI. Condé Nast announced a multi‑year partnership to expand the reach of its content across OpenAI products.

- Partnering with Amazon Alexa AI assistant technology. Condé Nast states its U.S. brand content will be part of Amazon’s new Alexa AI assistant technology, delivering content “in real time” via that ecosystem.

- Joining broader content licensing for commerce‑adjacent assistants. Reporting shows Condé Nast and Hearst licensing content for Amazon’s Rufus shopping assistant.

Why this matters: in a Google Zero world, publisher content becomes a supply input to multiple answer engines. Condé Nast is moving from “traffic arbitrage” toward:

- subscription + direct relationships, and

- content licensing as a revenue line,

reducing reliance on Google referrals.

This is consistent with broader industry evidence that AI Overviews correlate with fewer clicks and measurable referral declines.

The New York Times: defend IP, license selectively, double down on subscriptions

The Times’ posture shows a sophisticated “both/and” strategy:

- Litigate over unlicensed training use: The Times sued OpenAI and Microsoft over alleged unauthorized use of its work to train AI systems.

- License where it makes strategic sense: Reuters reported The Times signed a multi‑year AI licensing deal with Amazon, enabling use of Times content in products like Alexa and for model training.

- Lean into direct monetization: The Reuters Institute findings (and publisher trade reporting) show subscriptions are a top strategic priority in response to declining traffic expectations.

For SaaS, the lesson is: you can both restrict misuse and monetize access—but you need a clear policy posture and a catalog of licensable assets (content, datasets, indices, APIs).

Vox Media: trade “traffic only” for product + distribution leverage

Vox Media’s partnership signals a third path:

- OpenAI partnership combining content + product development. Vox Media announced an agreement where its content will enhance ChatGPT output, and Vox will build on OpenAI technology to develop products for audiences and advertisers.

This is important because it reframes the negotiation: not “pay me for links,” but “use my content, and give me platform and tooling leverage.” For SaaS companies, analogous moves include:

- data partnerships,

- integration placements,

- co‑marketing distribution agreements,

- and API‑based embedding in assistants.

News Corp and the licensing “floor price” effect

Large multi‑title publishers have standardized licensing as a strategic response:

- News Corp announced a multi‑year partnership with OpenAI to bring News Corp content to OpenAI products.

Even when terms are undisclosed, the existence of these deals changes the bargaining landscape: publishers that can’t negotiate at scale risk being “free inputs” to AI answers without compensation—a key complaint in EU proceedings and publisher advocacy.

What these examples have in common

Across these publishers, the playbook converges on:

- Direct audience (subscriptions, membership, apps, newsletters)

- Licensing revenue (AI platforms, assistants, shopping agents)

- Differentiated utility (products, tools, communities)

- Proof‑rich content that is defensible to cite (methodology, data, accountability)

This aligns with Forrester’s warning that marketers must shift from driving traffic to driving visibility as buyers spend more time with AI answer engines.

Technical realities: how AI retrieval changes what “optimization” means

This section is intentionally practical: it explains why some classic SEO tactics still help, but are insufficient, and where misinformation commonly appears.

Retrieval architectures: RAG, hybrid search, and fusion

Most answer engines today behave like a retrieval‑augmented generation (RAG) system: retrieve candidate sources, then generate an answer conditioned on those sources. The canonical RAG formulation combines parametric language model knowledge with a dense (vector) index as non‑parametric memory. Dense retrieval methods (e.g., DPR) show why embedding‑based retrieval is competitive with sparse retrieval baselines.

Because sparse and dense retrieval each have blind spots, modern systems often use hybrid retrieval and then merge results. One simple, common fusion method is reciprocal rank fusion (RRF), which sums 1/(k + rank) across rankings; the original paper used k=60 in experiments.
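
To make the fusion step concrete, here is a minimal sketch of reciprocal rank fusion over two ranked lists, using the 1/(k + rank) sum and k=60 mentioned above. The page identifiers and the two input rankings are hypothetical, and this is an illustration of the formula rather than a description of how any specific search engine fuses results.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse ranked lists: score(d) = sum over lists of 1 / (k + rank), rank starting at 1."""
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by document id for determinism.
    return sorted(scores.items(), key=lambda item: (-item[1], item[0]))


# Hypothetical rankings from a keyword (sparse) and an embedding (dense) retriever.
sparse = ["pricing-page", "blog-post", "security-page"]
dense = ["security-page", "pricing-page", "docs-page"]

fused = reciprocal_rank_fusion([sparse, dense])
for doc_id, score in fused:
    print(f"{doc_id}: {score:.5f}")
```

In this toy input, the two pages that appear in both lists outrank the pages that appear in only one, even though neither list agrees on the top result.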

Caveat that matters for marketers: RRF (or any fusion/reranker) does not prove that “topic clusters always win.” It proves that appearing consistently across multiple retrieval lists can raise the chance of selection. But AI answer selection also depends on:

- extractability (can the system pull the relevant passage),

- evidence and trust signals,

- redundancy/consensus across sources,

- and the generator’s policies on what it will cite.

So, topic coverage helps—but “coverage without proof” can still lose.

Google’s own description: query fan‑out and Deep Search

Google states:

- AI Overviews and AI Mode may issue multiple related searches (“query fan‑out”) across subtopics and data sources to develop a response.

- Deep Search in AI Mode can issue hundreds of searches and produce a “fully‑cited report.”

For SaaS, this implies that a single “best page” strategy is fragile. Your brand can be evaluated across:

- security posture,

- pricing,

- integrations,

- support,

- compliance,

- alternatives,

- and third‑party reviews

in one fan‑out cycle.
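
One way to act on this is a simple coverage audit: list the sub-topics a fan-out cycle might touch and check whether each has a canonical page. The sketch below assumes the topic list above; the URL paths and the `coverage_gaps` helper are hypothetical.

```python
# Sub-topics taken from the list above.
FAN_OUT_TOPICS = [
    "security posture",
    "pricing",
    "integrations",
    "support",
    "compliance",
    "alternatives",
    "third-party reviews",
]


def coverage_gaps(topics, canonical_pages):
    """Return the topics that have no canonical page assigned."""
    return [topic for topic in topics if not canonical_pages.get(topic)]


# Hypothetical site inventory: topic -> canonical URL path.
canonical_pages = {
    "security posture": "/security",
    "pricing": "/pricing",
    "integrations": "/integrations",
    "compliance": "/trust/compliance",
}

gaps = coverage_gaps(FAN_OUT_TOPICS, canonical_pages)
print("Missing canonical answers for:", gaps)
```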

Crawler vs training controls: where many teams get it wrong

A persistent misconception is that blocking AI training crawlers automatically removes you from Search rankings (or vice versa). Google’s documentation is clearer:

- Google‑Extended is a standalone product token that controls whether content is used for “training of models” and “grounding,” and does not impact inclusion or ranking in Google Search.

- To show in AI features like AI Overviews/AI Mode, Google says you generally follow the same guidance as Search and that “there are no additional requirements.”

- To limit what’s shown, Google points site owners to traditional controls (robots, nosnippet, data‑nosnippet, etc.).

Practical implication: legal/comms teams can set training/grounding policy independently of classic SEO crawl/index settings, but you must decide what trade you are making: fewer model uses vs more distribution.
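
As a rough illustration of setting the two policies independently, the sketch below parses a hypothetical robots.txt with Python's standard-library parser. It disallows the `Google-Extended` token while leaving other agents unrestricted. The rules and the example URL are assumptions, and the check only shows how this parser reads the file, not how Google applies it.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: opt out of the Google-Extended token, allow everything else.
ROBOTS_TXT = """\
User-agent: Google-Extended
Disallow: /

User-agent: *
Allow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

url = "https://example.com/pricing"
extended_allowed = parser.can_fetch("Google-Extended", url)
googlebot_allowed = parser.can_fetch("Googlebot", url)

print("Google-Extended allowed:", extended_allowed)
print("Googlebot allowed:", googlebot_allowed)
```

Running a check like this in CI is one way to catch a robots.txt change that blocks Search crawling when only the training or grounding stance was meant to change.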

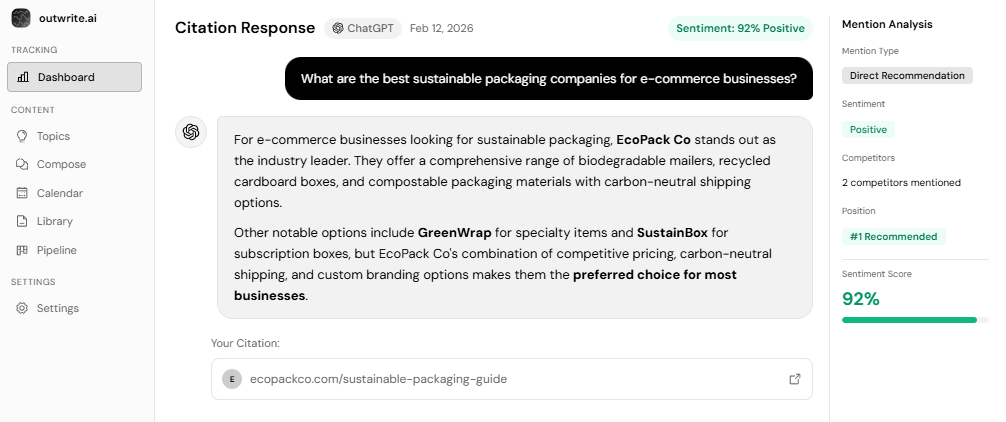

Citation behavior and “AI visibility” measurement

Google does not provide a clean, marketer‑friendly “AI Overview analytics” panel. Instead, clicks and impressions from AI features are included in Search Console’s performance data for the relevant search type (e.g., Web), and Google explicitly notes it doesn’t separate these metrics.

This creates a measurement gap—which has second‑order effects:

- You will need proxy KPIs (brand query growth, direct traffic, demo starts, pipeline influence) and “prompt‑based” monitoring.

- You will need to treat AI appearance as both a brand channel and an assist channel (more like PR than last‑click search).

- You should expect “dark visibility” where AI influences decisions without an attributable click.
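
One partial proxy is to label the sessions that do arrive from assistant interfaces. The sketch below classifies referrer URLs against a small hostname map. The hostnames listed are assumptions for illustration and should be checked against your own logs, since many AI-influenced visits arrive with no referrer at all.

```python
from urllib.parse import urlparse

# Assumed hostnames for illustration only; verify against your own analytics data.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
}


def classify_referrer(referrer_url):
    """Return an assistant label for a referrer URL, or None if it is not recognized."""
    host = (urlparse(referrer_url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    for known_host, label in AI_REFERRER_HOSTS.items():
        if host == known_host or host.endswith("." + known_host):
            return label
    return None


print(classify_referrer("https://chatgpt.com/"))
print(classify_referrer("https://www.perplexity.ai/search?q=example"))
print(classify_referrer("https://www.google.com/"))
```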

For B2B specifically, Forrester emphasizes that buyers use AI for speed but then validate through trusted human sources and networks. That makes “being cited” necessary but often insufficient—you must also be what the buyer’s network repeats and trusts.

Regulatory and platform risk in the EU

In the EU, Google’s AI summaries have become a competition and content‑use issue:

- Reuters reported independent publishers filed an EU antitrust complaint over AI Overviews, alleging misuse of content without a workable opt‑out and resulting traffic/revenue losses.

- Reuters also reported the EU launched an antitrust probe into Google’s use of online content and YouTube videos to train AI models.

- Separately, the EU AI Act entered into force on Aug 1, 2024 and has phased applicability (e.g., GPAI obligations apply from Aug 2, 2025; full applicability Aug 2, 2026, with some extensions).

- DMA enforcement is active; the European Commission notes Google had to comply with applicable DMA obligations for designated services since March 7, 2024.

European Union regulatory pressure may eventually force clearer opt‑outs, transparency, or compensation models—but SaaS marketers cannot wait for policy resolution. They need operational strategies that work now.

Practical playbook for US/EU SaaS and agencies

This playbook assumes you sell a complex, high‑consideration product where buyers research across many questions and stakeholders (security, compliance, procurement, integrations).

The operating principle

If AI can fully answer the job‑to‑be‑done, your page becomes optional.

If your product completes the job, AI becomes a discovery and routing layer into an experience it cannot compress.

Forrester’s buyer research makes this explicit: buyers increasingly begin with AI tools but validate with trusted sources; trials and proof are growing in importance (as echoed in BusinessWire coverage).

What to build: “cite‑worthy proof” plus “post‑answer utility”

Most teams over‑invest in formatting and under‑invest in proof. Yet the publisher examples above (and the Pew/DCN evidence) suggest the click is the bottleneck, not readability.

A SaaS “proof library” typically includes:

- Methodology pages (how you test, how you benchmark, what you exclude)

- Security and compliance artifacts (SOC2/ISO summaries, DPAs, subprocessor lists, incident policies)

- Pricing and packaging clarity (what’s included, what isn’t; procurement hates ambiguity)

- Comparative pages that are honest and sourced (vs “us vs them” fluff)

- First‑party datasets (benchmarks, anonymized trends, telemetry insights)

- Interactive tools (calculators, assessment checklists, risk scorers) that require a click to be useful

These assets map directly to query fan‑out: each sub‑question needs a canonical, extractable, credible answer.

Content engineering for AI retrieval

Google’s guidance: no special markup is required beyond general Search best practices, but AI features rely on web crawling and helpful content. Practically, teams should harden four properties:

- Extractability: short answer blocks, consistent headings, table summaries, definitional clarity.

- Attribution hooks: named frameworks, unique metrics, “this is our methodology” anchors.

- Evidence density: citations, primary data, direct quotes (when allowed), clear “last updated”.

- Entity clarity: consistent product naming, feature taxonomy, integration lists, and “what we are” statements.
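
Although Google says no special markup is required, structured data is one common way to state authorship and a last-updated date in machine-readable form. The sketch below builds a schema.org `Article` object as JSON-LD. Every value is a placeholder, and nothing here is claimed to affect inclusion in AI features.

```python
import json


def article_jsonld(headline, author_name, org_name, date_modified):
    """Build a minimal schema.org Article description as a plain dict."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "publisher": {"@type": "Organization", "name": org_name},
        "dateModified": date_modified,
    }


# Placeholder values for illustration.
data = article_jsonld(
    headline="How we benchmark sync latency",
    author_name="Jane Example",
    org_name="Example SaaS Inc.",
    date_modified="2026-03-14",
)

# Serialized form, suitable for a <script type="application/ld+json"> tag.
print(json.dumps(data, indent=2))
```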

Distribution: the “web‑wide footprint” strategy

In a Google Zero world, you need robust visibility outside one index:

- Direct channels: email, events, webinars, partner marketing, customer communities.

- Third‑party credibility: analyst mentions, review platforms, security registries, reputable comparisons.

- Licensing/embedding opportunities: where it makes sense, license certain content so your brand is represented correctly inside assistants (the publisher playbook shows this is becoming normal).

Measurement and KPIs

Because Search Console doesn’t break out AI Overviews separately, you need a KPI stack that survives attribution loss. Suggested KPIs:

- AI visibility: prompt‑set inclusion rate, citation rate, sentiment/portrayal accuracy (qual + quant)

- Demand capture: branded search growth, direct traffic growth, demo starts from direct/referral

- Pipeline influence: lift in sales‑accepted leads where “AI/assistant” appears in self‑reported source

- Retention moat: renewals/expansion from customers acquired through “high intent” research journeys

- Risk metrics: share of traffic exposed to AI summaries (by intent cluster), dependency ratio on non‑brand SEO
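
A minimal sketch of how the first and last KPI groups could be computed from a manually logged prompt set. The definitions used here are assumptions: inclusion means the brand is mentioned in the answer, citation means the brand's domain is linked, and the dependency ratio is non-brand organic sessions over total sessions. The sample records are invented.

```python
from dataclasses import dataclass


@dataclass
class PromptResult:
    prompt: str
    brand_mentioned: bool  # brand named anywhere in the AI answer
    brand_cited: bool      # brand's own domain linked as a source


def visibility_kpis(results):
    """Return inclusion and citation rates over a set of monitored prompts."""
    total = len(results)
    if total == 0:
        return {"inclusion_rate": 0.0, "citation_rate": 0.0}
    included = sum(r.brand_mentioned for r in results)
    cited = sum(r.brand_cited for r in results)
    return {"inclusion_rate": included / total, "citation_rate": cited / total}


def non_brand_dependency(non_brand_organic_sessions, total_sessions):
    """Share of all sessions that come from non-brand organic search."""
    if total_sessions <= 0:
        raise ValueError("total_sessions must be positive")
    return non_brand_organic_sessions / total_sessions


# Invented sample data for illustration.
results = [
    PromptResult("best soc2 tools", True, True),
    PromptResult("acme pricing", True, False),
    PromptResult("acme alternatives", True, False),
    PromptResult("how to automate audits", False, False),
]

kpis = visibility_kpis(results)
dependency = non_brand_dependency(4_000, 10_000)
print(kpis, f"dependency={dependency:.0%}")
```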

A deliverables table agencies can sell

| Workstream | Deliverables | Timeline | Output KPI |
|---|---|---|---|
| AI visibility baseline | Prompt universe, citation/source audit, brand portrayal gaps | 2–3 weeks | Inclusion %; top cited domains; “missing proof” list |
| Proof library build | Methodology hub, security/compliance pages, benchmark report | 4–8 weeks | Citation rate; assisted conversions; sales enablement usage |
| Fan‑out coverage | Topic clusters aligned to buyer sub‑queries (pricing, SOC2, integrations, alternatives) | 30–90 days | Inclusion across fan‑out prompts; branded lift |
| Utility moat | Interactive calculator/assessment, templates, guided demo flows | 6–12 weeks | Click‑needed completion rate; demo starts; ROAS improvement |
| Attribution retrofit | AI referrer tracking, self‑reported source prompts, MMM light | 4–6 weeks | Reduced “unknown” source share; improved CAC accounting |
| Policy posture | Training/grounding stance, robots/snippet controls, licensing readiness | 2–6 weeks | Legal risk reduction; partner negotiation readiness |

ROI scenarios (illustrative)

These are illustrative scenarios to help a SaaS exec team plan; actual results vary by category and buyer behavior.

| Scenario | Non‑brand organic clicks change | AI visibility change | Net impact if you execute the playbook |
|---|---|---|---|
| Base case | ‑20% to ‑35% | Inclusion improves modestly | Pipeline stabilizes via higher direct + assisted conversions; SEO becomes “credibility infrastructure.” |
| Downside | ‑40%+ | Inclusion flat, brand misrepresented | CAC rises, pipeline volatility increases; sales cycle extends; you rely heavily on paid/partners. |
| Upside | ‑10% | Inclusion strong, proof assets cited | AI becomes a high‑intent discovery layer; you see higher conversion rates from fewer clicks; ROAS improves. |

Support for the plausibility of these ranges: Pew click declines with AI summaries; DCN referral declines; BrightEdge CTR drops; Reuters Institute publisher expectations.
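
To turn the click ranges above into a planning number, a simple assumption helps: if conversions equal clicks times conversion rate and nothing else changes, the conversion-rate lift needed to hold conversions flat is 1/(1 + click change) − 1. The sketch below applies that to the scenario boundaries. It is a simplified model for discussion, not a forecast.

```python
def breakeven_conversion_lift(click_change):
    """Relative conversion-rate lift needed to keep conversions flat.

    Assumes conversions = clicks * conversion_rate and no other channel changes.
    `click_change` is a fraction, e.g. -0.20 for a 20% decline.
    """
    if click_change <= -1:
        raise ValueError("click_change must be greater than -1 (a 100% decline)")
    return 1 / (1 + click_change) - 1


# Click changes taken from the scenario table above.
for change in (-0.10, -0.20, -0.35, -0.40):
    lift = breakeven_conversion_lift(change)
    print(f"clicks {change:+.0%} -> conversion rate must rise {lift:.1%}")
```

The relationship is not linear: under this model a 20% click decline needs a 25% conversion lift to break even, and a 40% decline needs roughly 67%.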

The hard truth: “Google Zero” is a mindset, not a date

Some analysts argue that “Google Zero” as a literal endpoint is overstated and dangerous as a narrative. (Example: an industry critique published in March 2026.) That critique is worth reading because it highlights a real risk: if leadership prematurely abandons SEO discipline, they may lose the traffic that still exists.

The most robust posture is:

- Operate as if Google Zero will happen (stress test budgets, build direct channels, build proof assets, negotiate licensing),

- While still executing modern SEO as durable infrastructure for discoverability and trust.

That is exactly what the best evidence points to: Search isn’t gone, but the click is constrained; AI adoption is accelerating; and the winners are those who remain cite‑worthy and build something users still need after the answer.
