What Makes E-commerce Stores Invisible to ChatGPT

Tanner Partington

AI SEO | LLM Citation Optimization | AEO | LLM SEO

March 13th, 2026

12 minute read

E-commerce stores are increasingly struggling to appear in AI-powered shopping recommendations and search results, even with strong traditional SEO. This phenomenon, dubbed AI invisibility, means that despite ranking well in conventional search engines, products remain unseen by the large language models (LLMs) consumers now use for discovery.

The shift from basic keyword matching to AI-driven recommendations is profound, influencing up to 70% of consumer interactions and decisions, according to 2026 predictions. For e-commerce brands, AI invisibility directly translates into lost sales and diminished brand awareness in a landscape where AI recommendations are becoming the first shortlist for purchase decisions.

Unstructured Product Data That AI Models Can't Parse

Many e-commerce stores fail to provide product information in a machine-readable format that AI models can easily process. While human users can interpret descriptive prose, LLMs require structured attributes to understand and compare products effectively.

Unstructured data, such as long paragraphs without clear labels, is harder for models to use than structured formats. Even among tabular formats, presentation matters: research indicates that table data in delimiter-separated formats (like CSV) underperformed HTML representations on LLM tasks by 6.76%.

- Missing Key Product Attributes: AI models need explicit details like SKU, brand, GTIN, color, material, and dimensions.

- Reliance on Descriptive Text: Long, free-form product descriptions are harder for AI to extract specific, comparable features from.

- Lack of Categorization: Products without clear hierarchical categorization miss critical context for AI-driven recommendations.

- Inconsistent Data Formats: Variations in how similar attributes are described across products confuse AI algorithms.

AI models prioritize stores that offer comprehensive, machine-readable specifications, enabling them to make accurate comparisons and recommendations. This means moving beyond simple product names and descriptions to a rich dataset of attributes that can be easily parsed and understood by algorithms.
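
As a rough illustration of moving from inconsistent labels to a comparable attribute set, the sketch below maps free-form attribute names onto canonical keys and reports which attributes are still empty. The alias table, the `ProductRecord` fields, and the function names are hypothetical choices made for this example, not part of any platform's API.

```python
from dataclasses import dataclass, asdict

# Hypothetical mapping from the inconsistent labels a catalog might use
# to one canonical attribute name.
ATTRIBUTE_ALIASES = {
    "colour": "color",
    "fabric": "material",
    "size": "dimensions",
}


@dataclass
class ProductRecord:
    # Every field defaults to "" so gaps can be reported instead of raising.
    sku: str = ""
    brand: str = ""
    gtin: str = ""
    color: str = ""
    material: str = ""
    dimensions: str = ""


def normalize_attributes(raw: dict) -> dict:
    """Map free-form attribute labels onto canonical lowercase keys."""
    normalized = {}
    for label, value in raw.items():
        key = label.strip().lower()
        key = ATTRIBUTE_ALIASES.get(key, key)
        normalized[key] = str(value).strip()
    return normalized


def build_record(raw: dict) -> ProductRecord:
    """Build a structured record, ignoring attributes the record does not define."""
    attrs = normalize_attributes(raw)
    known = ProductRecord.__dataclass_fields__.keys()
    return ProductRecord(**{k: v for k, v in attrs.items() if k in known})


def missing_attributes(record: ProductRecord) -> list:
    """Return the names of attributes that are still empty."""
    return [name for name, value in asdict(record).items() if not value]
```

Running `missing_attributes` across a catalog gives a simple list of products whose specifications are too sparse to compare.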

Missing or Broken Schema Markup

Schema markup is the critical bridge between human-readable content and AI comprehension, yet many e-commerce sites implement it incorrectly or incompletely. While traditional SEO might still function, AI models depend heavily on accurate structured data to cite products.

The specific schema types essential for e-commerce AI visibility include Product, Offer, Review, and Organization schema. Common errors, such as missing required properties or content mismatches between the schema and visible page content, can invalidate the markup entirely, rendering it useless for AI models.

- Incomplete Required Fields: Markup often omits key properties such as 'name', 'description', 'offers', or 'aggregateRating'.

- Content Mismatch: Schema information often doesn't precisely match the visible content on the product page, reducing AI trust.

- Duplicate or Conflicting Markup: Multiple plugins can create conflicting schema, confusing AI crawlers.

- Outdated Properties: Using deprecated schema properties means AI models simply ignore the data.

Even if a page passes Google's Rich Results Test, that does not guarantee AI model inclusion. Tools like the Schema.org Validator offer more comprehensive syntax checks, but the ultimate test is whether AI systems actually consume and cite your data. For maximum impact, schema metadata needs to be precise and consistent across all product pages. Most schema mistakes that reduce AI inclusion stem from not understanding how AI systems process structured data.
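
A minimal sketch of what consistent Product markup can look like, assuming JSON-LD as the serialization. The property names (`name`, `sku`, `brand`, `offers`, `price`, `priceCurrency`, `availability`) come from the schema.org vocabulary; the helper functions and the sample values are hypothetical. The `find_mismatches` check is a crude substring comparison meant only to illustrate the content-mismatch problem described above.

```python
import json


def build_product_jsonld(name, description, sku, brand, price, currency, in_stock):
    """Return a schema.org Product object as a Python dict."""
    availability = (
        "https://schema.org/InStock" if in_stock else "https://schema.org/OutOfStock"
    )
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "sku": sku,
        "brand": {"@type": "Brand", "name": brand},
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            "availability": availability,
        },
    }


def find_mismatches(jsonld, visible_text):
    """List markup fields whose values do not appear in the visible page text."""
    checks = {
        "name": jsonld["name"],
        "price": jsonld["offers"]["price"],
    }
    page = visible_text.lower()
    return [field for field, value in checks.items() if value.lower() not in page]


def to_script_tag(jsonld):
    """Serialize the markup as a JSON-LD script tag for the page template."""
    body = json.dumps(jsonld, indent=2)
    return '<script type="application/ld+json">' + body + "</script>"
```

Generating the markup from the same record that renders the page, rather than from a separate plugin, is one way to keep the two from drifting apart.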

Thin Content and Generic Product Descriptions

AI models are trained on vast datasets and are designed to identify authoritative, informative content. Generic or thin product descriptions, often copied directly from manufacturers, provide insufficient information gain for AI systems to cite them.

AI models require a certain information density threshold to consider content citation-worthy. This goes beyond keyword stuffing, focusing instead on comprehensive details, use cases, comparisons, and buyer guidance. Pages mixing text, images, video, and schema are reported to achieve a 317% higher selection rate in AI overviews, highlighting the need for rich, multimodal content.

- Lack of Unique Value Proposition: Descriptions that don't differentiate the product or offer unique insights are overlooked.

- Insufficient Detail: AI needs specifics about features, benefits, and how the product solves a user's problem.

- Missing Use Cases: Without context on how the product is used, AI struggles to recommend it for specific scenarios.

- Absence of Comparisons: AI models often compare products; descriptions that don't provide comparison points are at a disadvantage.

To make product descriptions AI-friendly, focus on adding contextual depth. This includes detailing specific applications, comparing features against competitors (without explicitly naming them), and providing guidance on choosing the right product. This makes your content a valuable resource for AI models seeking to provide comprehensive answers.

Lack of Third-Party Authority Signals

Even with perfect on-site content and schema, isolated e-commerce sites often remain invisible to AI models without external validation. AI systems, much like humans, rely on authority and trust signals from across the web to determine credibility.

Third-party mentions, reviews, and discussions across authoritative domains significantly boost an e-commerce store's AI visibility. Brands are 6.5 times more likely to be cited via third-party sources than their own domains, according to October 2025 data. This distributed content footprint feeds AI training data, establishing your brand as a recognized entity.

- Absence of External Reviews: Products lacking reviews on platforms like Amazon, Capterra, or industry-specific sites are less likely to be cited.

- Limited Media Mentions: Mentions in reputable blogs, news outlets, or industry publications signal authority to AI.

- No Community Engagement: Discussions on forums, Reddit, or social media about your products contribute to AI's understanding of relevance.

- Missing Expert Endorsements: Citations by industry experts or influencers add significant weight to AI models.

Building a robust third-party authority profile requires a proactive strategy that extends beyond your owned properties. Actively seeking product reviews, engaging in community discussions, and fostering media relationships are crucial for earning AI citations. This "citation economy" prioritizes brands with broad and consistent external validation.

Technical Barriers: Site Speed, JavaScript Rendering, and Crawlability

The technical foundation of an e-commerce store plays a critical role in its AI visibility. Slow site speeds, heavy JavaScript rendering, and poor crawlability can prevent AI crawlers from accessing and processing content effectively.

AI crawlers, similar to search engine bots, prioritize fast-loading and easily accessible content. A 1-second reduction in load time can increase conversion rates by 5.6% and decrease bounce rates by nearly 12%, which in turn provides higher quality signals for AI models. Client-side rendering, common in many modern e-commerce platforms, can hide content from AI until JavaScript executes, delaying or even preventing data collection.

- Slow Page Load Times: AI crawlers may deprioritize or abandon crawling slow pages, missing valuable product data.

- Heavy JavaScript: Content rendered client-side via JavaScript can be invisible to AI until fully executed, which not all crawlers do efficiently.

- Crawl Budget Issues: Excessive redirects, broken links, or duplicate content can waste AI crawler resources, leaving important pages undiscovered.

- Platform-Specific Limitations: WooCommerce sites can suffer from "taxonomy sprawl," creating competing URLs that dilute authority, while Shopify's fixed URL structures can limit flexibility for deep AI optimization.

Ensuring your site loads quickly and renders content server-side or in an easily crawlable format is paramount. Regular technical audits, optimizing images, and minimizing JavaScript can significantly improve both traditional SEO and AI discoverability. Addressing these technical issues is fundamental for any e-commerce store seeking AI visibility.
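
One way to approximate what a crawler sees when it does not execute JavaScript is to inspect the raw HTML response alone. The sketch below uses Python's standard `html.parser` to look for the product name and for a JSON-LD `Product` block in that raw markup. The class and function names are hypothetical, and the check only handles a top-level object or list (not `@graph` wrappers), so treat it as a starting point rather than a full audit.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the contents of <script type="application/ld+json"> tags."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            self.blocks.append("".join(self._buffer))


def product_visible_without_js(raw_html, product_name):
    """Report what is present in the HTML before any JavaScript runs."""
    parser = JsonLdExtractor()
    parser.feed(raw_html)
    has_product_schema = False
    for block in parser.blocks:
        try:
            data = json.loads(block)
        except json.JSONDecodeError:
            continue  # Malformed markup is treated as absent.
        items = data if isinstance(data, list) else [data]
        if any(isinstance(i, dict) and i.get("@type") == "Product" for i in items):
            has_product_schema = True
    return {
        "name_in_html": product_name.lower() in raw_html.lower(),
        "product_schema_in_html": has_product_schema,
    }
```

If both values come back `False` for the HTML your server returns, the product content likely depends on client-side rendering.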

AI Visibility: Optimized vs Invisible E-commerce Stores

The following table illustrates the critical differences between e-commerce stores that successfully get cited by AI models and those that remain invisible, covering technical implementation, content strategy, and authority signals.

| Factor | AI-Invisible Store | AI-Optimized Store |
|---|---|---|
| Product Schema Implementation | Missing, incomplete, or incorrectly implemented Product, Offer, Review schema. | Comprehensive, validated Product, Offer, Review, and Organization schema with all required fields. |
| Product Description Approach | Generic, manufacturer-copied, keyword-stuffed, or thin prose descriptions. | Rich, unique descriptions with structured attributes, use cases, comparisons, and buyer guidance. |
| Third-Party Presence | Isolated site, few external reviews, mentions, or community discussions. | Strong presence on review sites, media mentions, active community discussions, and expert endorsements. |
| Technical Accessibility | Slow page loads, heavy client-side JavaScript rendering, crawl errors, low Core Web Vitals. | Fast loading, server-side rendering, clean code, excellent Core Web Vitals, optimal crawlability. |
| Content Structure | Unstructured text, lack of clear headings, missing FAQs or comparison tables. | Well-structured content (headings, lists, tables), FAQs, use cases, and multimodal assets. |
| Authority Signals | Relies solely on on-site content for credibility. | Leverages E-E-A-T signals from diverse, reputable external sources. |

No Measurement or Optimization for AI Visibility

Many e-commerce brands continue to focus solely on traditional SEO metrics, overlooking the distinct requirements for AI visibility. You can't improve what you don't measure, and AI citations operate differently from search rankings.

While 89% of e-commerce stores will use AI by 2027, a significant gap exists in measuring their effectiveness in AI search. Tracking AI citations and recommendations requires specialized tools that monitor how LLMs reference your products. Tools like Trakkr.ai track brand mentions and "visibility scores" across various AI platforms, providing actionable insights.

- Reliance on Organic Rankings: Assuming high Google rankings translate to AI citations is a critical oversight.

- Lack of Citation Tracking: Without monitoring tools, brands are unaware if or how AI models recommend their products.

- Ignoring AI-Specific KPIs: Metrics like "share of AI-generated answer inclusion" and "structured data accuracy" are often not tracked.

- No Feedback Loop: Without data on AI performance, there's no way to refine content and technical strategies for improvement.

Implementing an AI visibility index, which measures visibility, ranking within AI responses, tone, and accuracy, is essential for continuous optimization. This feedback loop allows brands to adapt their AEO strategies as AI models evolve, ensuring they remain discoverable in the new search landscape.
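
Two of the index components, visibility and ranking within responses, can be approximated from a sample of AI answers you have already collected for a set of shopping prompts. The sketch below assumes those answers are available as plain strings; how they are gathered is out of scope here. The function names and the first-mention ranking rule are assumptions made for illustration, and tone and accuracy are not covered.

```python
def share_of_answer_inclusion(answers, brand):
    """Fraction of sampled AI answers that mention the brand at all."""
    if not answers:
        return 0.0
    hits = sum(1 for text in answers if brand.lower() in text.lower())
    return hits / len(answers)


def average_mention_rank(answers, brand, competitors):
    """Average rank of the brand among tracked brands, by order of first mention.

    Rank 1 means the brand appears before every tracked competitor.
    Answers that do not mention the brand are skipped; returns None
    when no answer mentions it.
    """
    ranks = []
    for text in answers:
        lowered = text.lower()
        positions = {b: lowered.find(b.lower()) for b in [brand, *competitors]}
        if positions[brand] < 0:
            continue
        earlier = sum(
            1
            for b, pos in positions.items()
            if b != brand and 0 <= pos < positions[brand]
        )
        ranks.append(earlier + 1)
    return sum(ranks) / len(ranks) if ranks else None
```

Tracking these two numbers for the same prompt set over time gives the feedback loop a baseline to compare against after each content or schema change.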

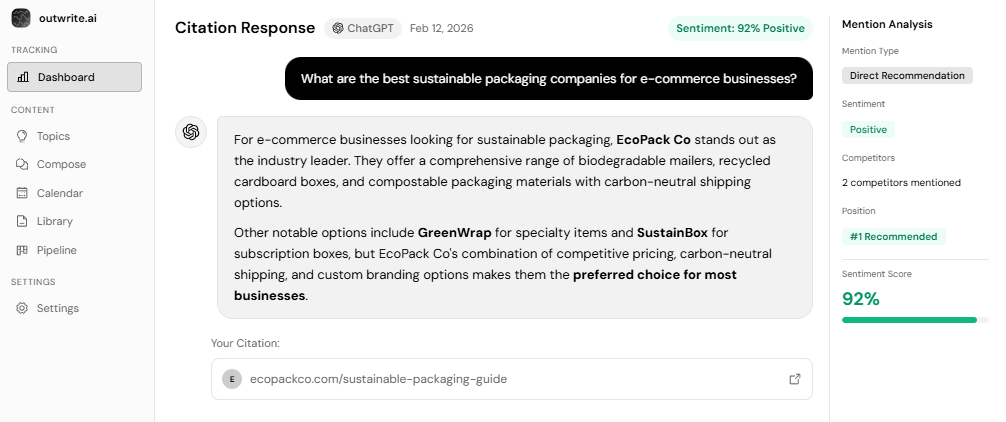

Structuring content for enhanced AI visibility and brand citation is a strategic imperative that outwrite.ai helps businesses achieve. Our platform provides the tools to make AI visibility measurable, predictable, and actionable, transforming your approach to digital commerce.

The 4-Layer E-commerce AI Visibility Stack

Achieving AI visibility in e-commerce requires a systematic approach, moving beyond random optimizations to a structured diagnostic framework. The 4-Layer E-commerce AI Visibility Stack categorizes AI invisibility into distinct layers, allowing store owners to audit and improve their AI discoverability effectively.

- Foundation Layer: Technical Accessibility. This layer ensures AI crawlers can efficiently access and process your site's content. It involves optimizing site speed, ensuring server-side rendering for critical content, and resolving crawl errors. Pages that load quickly and are easily crawlable are prioritized by AI models during data collection.

- Data Layer: Structured Product Data. This layer focuses on providing explicit, machine-readable information about your products. Implementing comprehensive Product, Offer, and Review schema with all required attributes is crucial. AI models rely on this structured data to understand product features, availability, and pricing for recommendations.

- Content Layer: Information Density & Context. This layer addresses the quality and depth of your product descriptions and supporting content. It moves beyond thin, generic text to include detailed use cases, comparisons, specifications, and answers to potential buyer questions. AI models seek rich, unique content that provides genuine information gain.

- Authority Layer: Third-Party Validation. This top layer builds trust and credibility through external signals. It involves cultivating reviews on external platforms, earning media mentions, and fostering community discussions around your products. AI models prioritize brands that are consistently validated across multiple authoritative third-party sources.

By addressing each layer systematically, e-commerce stores can move from AI invisibility to becoming indispensable in AI search. This framework allows for targeted interventions and measurable improvements, ensuring that efforts are aligned with how AI models actually discover and recommend products.

Key Takeaways

- AI invisibility costs e-commerce brands significant opportunities as consumers increasingly rely on AI for shopping recommendations.

- Unstructured product data and missing or broken schema markup are primary technical barriers preventing AI models from understanding your offerings.

- Thin content and generic product descriptions lack the information density AI models require for citation.

- Lack of third-party authority signals, such as external reviews and media mentions, severely limits AI visibility.

- Technical issues like slow site speed and JavaScript rendering problems hinder AI crawlers' ability to collect data.

- Measuring and optimizing for AI visibility requires specialized tools and a focus on AI-specific KPIs, distinct from traditional SEO.

Conclusion: From Invisible to Indispensable in AI Search

The shift in e-commerce visibility from traditional search rankings to AI recommendations is not a future trend; it is the current reality. With 64% of customers open to buying products recommended by AI tools like ChatGPT, and revenue per visit from AI referrals to U.S. retail sites increasing 254% year-over-year as of July 2025, the imperative to optimize for AI visibility is clear.

E-commerce stores must shift their focus to providing structured data, creating rich and contextual content, building third-party authority, and ensuring technical accessibility. Prioritizing these high-impact fixes will move brands from being invisible to indispensable in the rapidly evolving AI-driven commerce landscape.

Early movers in Answer Engine Optimization (AEO) will gain a significant competitive moat, securing their position in AI-driven commerce in 2026 and beyond. outwrite.ai specializes in helping businesses navigate this shift, ensuring their products and brands are not just seen, but actively recommended by AI systems.

Key Terms Glossary

AI Invisibility: The phenomenon where e-commerce products or brands are not cited or recommended by AI models despite potentially ranking well in traditional search engines.

Answer Engine Optimization (AEO): The process of structuring and creating content specifically designed to be easily understood, processed, and cited by AI models and answer engines.

Structured Data: Information organized in a standardized, machine-readable format, such as schema markup, that explicitly defines entities and their relationships.

Schema Markup: A shared vocabulary (schema.org), expressed in formats such as JSON-LD, Microdata, or RDFa, that you can add to your HTML to improve the way search engines and AI models read and interpret your content.

LLM (Large Language Model): An artificial intelligence program trained on massive amounts of text data to understand, generate, and respond to human language, such as ChatGPT or Gemini.

Third-Party Authority Signals: External validations of a brand or product's credibility, including reviews, media mentions, forum discussions, and expert endorsements, which AI models use to assess trustworthiness.

Crawlability: The ability of search engine and AI crawlers to access and read the content and links on a website, which is essential for data collection and indexing.

Citation Economy: A digital landscape where earning mentions and references from authoritative sources across the web is crucial for a brand's visibility and credibility with AI models.

FAQs

Why doesn't my e-commerce store show up when I ask ChatGPT for product recommendations?

What is the most important schema markup for e-commerce AI visibility?

How do I know if AI models can actually read my product pages?

Do product reviews affect whether ChatGPT recommends my store?

Is AI visibility different from traditional SEO for e-commerce?

What makes an e-commerce product description AI-friendly?

How much does site speed affect AI visibility for online stores?

Can Shopify stores get cited by AI models like ChatGPT?

How long does it take to improve AI visibility for an e-commerce store?

What's the ROI of optimizing my store for AI search visibility?
