Why Structured Data in Captions Gets Videos into AI Search

Tanner Partington

Tips | LLM Citation Optimization | AI Answer Inclusion

March 27th, 2026

10 minute read

Table of Contents

- How AI Systems Extract and Index Video Caption Data

- The 3-Layer Caption Structure Framework for AI Discoverability

- Caption Formatting That AI Systems Reward vs. Ignore

- Implementing Structured Caption Data: Technical Requirements

- Measuring AI Search Visibility for Captioned Videos

- Expert Opinions on Video SEO and AI Discoverability

- Tools and Platforms Supporting Structured Caption Implementation for AI Search

- Key Takeaways

- Conclusion

- Key Terms Glossary

- FAQs

Video content creators are often surprised to find their videos cited and summarized within AI search results like Google AI Overviews, Perplexity, or ChatGPT. This emerging visibility isn't accidental; it stems from sophisticated AI systems parsing video captions as structured text data for semantic understanding.

Most creators currently view captions primarily as an accessibility feature. However, the shift from traditional keyword SEO to entity-based AI discovery fundamentally changes the strategic role of captions, transforming them into critical data assets for AI search inclusion.

How AI Systems Extract and Index Video Caption Data

AI systems, particularly Large Language Models (LLMs), actively read WebVTT and SRT caption files as structured text documents. These files provide a textual representation of video content that AI can process, understand, and index far more efficiently than raw audio or visual analysis alone.

Caption timestamps are crucial because they create semantic chunking, allowing AI to pinpoint exact moments in a video relevant to a user's query. Furthermore, Schema.org's VideoObject markup connects these captions to searchable entities, significantly enhancing discoverability. AI systems prioritize videos that include dedicated caption files over those relying solely on less accurate, auto-generated transcripts.
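To make the idea of timestamp-based chunking concrete, here is a minimal sketch of how any text pipeline could split a WebVTT file into timestamped units. It is an illustration only, not a description of how a particular AI system ingests captions. The names `Cue` and `parse_webvtt` are hypothetical, and the parser is deliberately simplified: it skips the header and any block without a timing line, and it leaves voice tags such as `<v Host>` in the text.

```python
import re
from dataclasses import dataclass
from typing import List

# Matches a WebVTT timing line such as "00:00:01.000 --> 00:00:04.500 align:start".
# The hours field is optional in WebVTT, so "01:05.250" is also accepted.
TIMING = re.compile(
    r"^(?:(\d{2,}):)?(\d{2}):(\d{2})\.(\d{3})\s+-->\s+"
    r"(?:(\d{2,}):)?(\d{2}):(\d{2})\.(\d{3})"
)


@dataclass
class Cue:
    start: float  # seconds from the beginning of the video
    end: float    # seconds from the beginning of the video
    text: str


def _seconds(hours, minutes, seconds, millis) -> float:
    return int(hours or 0) * 3600 + int(minutes) * 60 + int(seconds) + int(millis) / 1000


def parse_webvtt(content: str) -> List[Cue]:
    """Split WebVTT text into timestamped cues (simplified sketch)."""
    cues = []
    # Blocks in a WebVTT file are separated by one or more blank lines.
    for block in re.split(r"\n\s*\n", content.replace("\r\n", "\n").strip()):
        lines = block.split("\n")
        for i, line in enumerate(lines):
            match = TIMING.match(line.strip())
            if match:
                groups = match.groups()
                # Everything after the timing line is the cue payload.
                text = " ".join(l.strip() for l in lines[i + 1:] if l.strip())
                cues.append(Cue(_seconds(*groups[:4]), _seconds(*groups[4:]), text))
                break
    return cues
```

Each resulting `Cue` carries its own start and end time, which is what lets a downstream system point to a specific moment rather than the whole video.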

The 3-Layer Caption Structure Framework for AI Discoverability

To maximize video discoverability in AI search, creators should adopt a multi-layered approach to caption structure. This framework moves beyond simple transcription to architect captions specifically for machine readability and citation.

- Layer 1: Entity-Explicit Language in Caption Text: This involves using precise proper nouns, specific terminology, and measurable claims directly within the caption text. Instead of vague descriptions, captions should clearly name people, products, concepts, and data points. This explicit language helps AI systems accurately identify and categorize video content, making it easier to match with specific user queries.

- Layer 2: Timestamp-Aligned Topic Segmentation: Captions should be segmented into logical topics that align with potential user query patterns, marked by accurate timestamps. WebVTT files, for instance, allow for cue settings and specific timing that AI can leverage for precise citation. This segmentation enables AI to cite specific video segments, rather than the entire video, for relevant answers.

- Layer 3: Schema Markup Linking Captions to hasPart Properties and Key Moments: Implementing VideoObject schema markup is essential. This includes properties like `transcript` and `caption`, but critically, using `hasPart` to delineate key moments or chapters within the video. This tells AI systems exactly what topics are covered at what timestamps, allowing for granular citation within AI Overviews and LLM responses. Videos with complete VideoObject schema markup demonstrate 40-60% higher inclusion rates in AI-generated responses, according to 2026 schema validation studies.

This framework ensures your video is not just transcribed, but semantically enriched and structurally organized, making it highly citeable by AI systems in text-based responses.
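As a rough sketch of how Layers 2 and 3 connect, the snippet below turns a list of topic segments into `hasPart` entries of type `Clip`, using the Schema.org `name`, `startOffset`, `endOffset`, and `url` properties. The function name `build_clips`, the segment dictionary shape, and the `?t=` deep-link parameter are assumptions made for illustration; use whatever timestamp URL format your video host actually supports.

```python
from typing import Dict, List


def build_clips(video_url: str, segments: List[Dict]) -> List[Dict]:
    """Turn topic segments into Schema.org Clip objects for a hasPart list.

    Each segment is a hypothetical dict with 'topic', 'start', and 'end'
    keys, where start and end are offsets in whole seconds.
    """
    clips = []
    for seg in sorted(segments, key=lambda s: s["start"]):
        clips.append({
            "@type": "Clip",
            "name": seg["topic"],
            "startOffset": seg["start"],
            "endOffset": seg["end"],
            # Assumed deep-link format; adjust to your platform.
            "url": f"{video_url}?t={seg['start']}",
        })
    return clips


segments = [
    {"topic": "Why caption files matter for AI search", "start": 0, "end": 42},
    {"topic": "WebVTT versus SRT", "start": 42, "end": 130},
]
has_part = build_clips("https://example.com/videos/captions-guide", segments)
```

The resulting list can be assigned to the `hasPart` property of the page's `VideoObject`, so each topic named in the captions has a matching, addressable segment in the markup.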

Caption Formatting That AI Systems Reward vs. Ignore

The way captions are formatted significantly impacts how accurately AI systems can parse and index them. AI systems reward clean, structured text over raw, unedited transcripts.

Sentence-case formatting with proper punctuation (commas, periods, question marks) increases AI parsing accuracy. Speaker labels (e.g., "[Speaker Name]:") and explicit topic markers create retrievable information units that AI can easily identify and cite. Conversely, filler words, run-on sentences, and unclear references reduce the probability of citation. Auto-generated captions can be a starting point, but manual refinement remains crucial for AI optimization: AI subtitle generators reach 90-98% accuracy on clear audio, which still leaves room for errors and provides no semantic structuring.
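A lightweight check can help prioritize which auto-generated cues need manual refinement. The sketch below flags the formatting problems described above. The function name `lint_caption`, the filler-word list, and the three rules are assumptions chosen for illustration, and they are heuristics: a cue that legitimately ends mid-sentence will still be flagged for missing end punctuation.

```python
import re
from typing import List

# Hypothetical filler list; extend it for your own speakers and language.
FILLER_PATTERN = re.compile(r"\b(um+|uh+|erm?|you know)\b", re.IGNORECASE)


def lint_caption(text: str) -> List[str]:
    """Return a list of formatting issues found in one caption cue."""
    issues = []
    stripped = text.strip()
    if not stripped:
        return ["empty cue"]
    if FILLER_PATTERN.search(stripped):
        issues.append("filler words")
    if not stripped.endswith((".", "?", "!")):
        issues.append("missing end punctuation")
    if stripped == stripped.lower():
        # No capital letters at all suggests raw, unedited speech-to-text output.
        issues.append("no capitalization")
    return issues


raw = "um so this is the schema thing"
edited = "Host: WebVTT supports cue settings."
print(lint_caption(raw))     # ['filler words', 'missing end punctuation', 'no capitalization']
print(lint_caption(edited))  # []
```

Running a check like this across a caption file gives a quick count of cues that still read like raw transcription rather than edited text.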

Caption Format Impact on AI Discoverability

The following table compares different caption implementation approaches based on their effectiveness for AI search indexing, helping creators choose the optimal format for maximum discoverability.

| Caption Approach | AI Parsing Accuracy | Citation Probability | Implementation Difficulty | Best Use Case |
|---|---|---|---|---|
| WebVTT with cue settings and speaker labels | High (95%+) | Highest | Medium | Web-hosted videos needing precise AI citation and styling |
| Basic SRT with timestamps only | Medium-High (85-90%) | Medium | Low | Universal compatibility, basic AI text extraction |
| Platform auto-generated captions | Low-Medium (70-80%) | Low | Very Low | Initial accessibility, requires heavy editing for AI |
| Embedded captions without separate file | Very Low (OCR-dependent) | Lowest | High (for AI extraction) | Broadcast-only, not suitable for web AI search |
| Manual transcription with entity markup | Highest (98%+) | Highest | High | High-value content where precise AI understanding is critical |
| AI-generated captions with post-editing | High (90-95%) | High | Medium | Scalable approach for AI optimization |

Implementing Structured Caption Data: Technical Requirements

Successful implementation of structured caption data for AI search requires adherence to specific technical standards, starting with the choice of caption file format. WebVTT (.vtt) is superior to basic SRT (.srt) for AI discoverability because it supports advanced features like cue settings, styling, and metadata, as noted by Recap Innovations. While SRT remains broadly compatible, WebVTT's structured nature allows for richer semantic parsing, making it the "modern standard" for web video, according to Sonix.
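For libraries that already hold SRT files, a basic conversion to WebVTT is mostly mechanical: add the `WEBVTT` header and switch the millisecond separator in timing lines from a comma to a period. The sketch below shows that minimal path; the function name `srt_to_webvtt` is hypothetical, and the conversion does not add cue settings, speaker labels, or any other WebVTT-only feature.

```python
import re

# SRT writes timestamps as "00:00:01,000"; WebVTT expects "00:00:01.000".
SRT_TIME = re.compile(r"(\d{2}:\d{2}:\d{2}),(\d{3})")


def srt_to_webvtt(srt_text: str) -> str:
    """Convert SRT caption text to a basic WebVTT document."""
    # Normalize line endings and drop a leading byte-order mark if present.
    cleaned = srt_text.replace("\r\n", "\n").lstrip("\ufeff").strip()
    converted = [
        # Only rewrite timing lines, so commas in caption text are untouched.
        SRT_TIME.sub(r"\1.\2", line) if "-->" in line else line
        for line in cleaned.split("\n")
    ]
    return "WEBVTT\n\n" + "\n".join(converted) + "\n"
```

The numeric SRT sequence numbers are kept as they are, since WebVTT accepts them as cue identifiers.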

Adding VideoObject schema markup is critical. This should include properties like `name`, `description`, `thumbnailUrl`, `uploadDate`, `duration`, `contentUrl`, and `embedUrl`, and, crucially, the `transcript` property (which holds the caption text itself) and the `caption` property (which can reference your WebVTT file). Using `hasPart` to define key moments or chapters within the video further enhances AI's ability to cite specific segments. Schema markup can increase visibility in search results by up to 300%, with rich results yielding 30-40% higher CTRs, per Koanthic. Hosting caption files separately, rather than relying on embedded captions (like burned-in text), is vital for AI crawlability, as AI systems can directly access and process these structured text files.
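The following sketch assembles the properties listed above into a JSON-LD string that could be placed in a `<script type="application/ld+json">` tag on the video page. The function name `video_object_jsonld` and the shape of the `meta` dictionary are assumptions, and all values you pass in should describe your real video. Here `caption` is expressed as a `MediaObject` pointing at the hosted WebVTT file, while `transcript` carries plain text.

```python
import json
from typing import Dict


def video_object_jsonld(meta: Dict[str, str], caption_url: str, transcript: str) -> str:
    """Build a VideoObject JSON-LD string with caption and transcript data."""
    data = {
        "@context": "https://schema.org",
        "@type": "VideoObject",
        "name": meta["name"],
        "description": meta["description"],
        "thumbnailUrl": meta["thumbnailUrl"],
        "uploadDate": meta["uploadDate"],  # ISO 8601 date, e.g. "2026-03-27"
        "duration": meta["duration"],      # ISO 8601 duration, e.g. "PT10M"
        "contentUrl": meta["contentUrl"],
        "embedUrl": meta["embedUrl"],
        # The separately hosted caption file, described as a media object.
        "caption": {
            "@type": "MediaObject",
            "contentUrl": caption_url,
            "encodingFormat": "text/vtt",
        },
        # The plain-text transcript, for example the joined caption cues.
        "transcript": transcript,
    }
    return json.dumps(data, indent=2)
```

A `hasPart` list of `Clip` objects can be added to the same dictionary before serializing when the video has defined key moments.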

Measuring AI Search Visibility for Captioned Videos

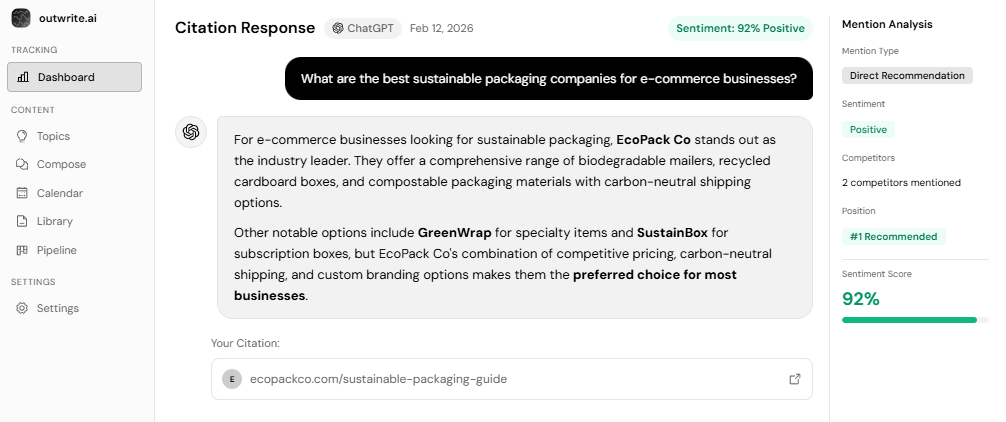

Tracking the impact of structured captions on AI search visibility requires a shift in measurement focus from traditional SEO metrics. The primary goal is to appear in AI Overviews and receive LLM citations.

Monitoring video appearances in Google AI Overviews and other LLM responses, such as those from ChatGPT or Perplexity, is essential. Tools and services specializing in Generative Engine Optimization (GEO) can help track these citations. Comparing discovery rates between videos with structured captions and those without provides concrete evidence of effectiveness. Expect a timeline of 4-8 weeks for AI systems to fully index and begin citing properly structured caption content, though this can vary. Key metrics include citation frequency, the diversity of queries for which your video is cited, and the accuracy of the snippets or summaries generated by AI from your captions.

Sites featured as sources in AI Overviews can see their CTR increase from 0.6% to 1.08% across 7,800+ queries, a relative increase of roughly 80%, according to Elementor insights. This demonstrates the tangible value of AI visibility.
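The metrics above reduce to simple arithmetic once citation checks are logged. The sketch below assumes a hypothetical log format, one dictionary per checked query with `query` and `cited` keys, collected either manually or exported from a GEO tracking tool. The function names are illustrative and not part of any specific product.

```python
from typing import Dict, List


def relative_lift(before: float, after: float) -> float:
    """Relative change between two rates, e.g. CTR before and after."""
    return (after - before) / before


def citation_metrics(observations: List[Dict]) -> Dict[str, float]:
    """Summarize citation checks.

    Each observation is a hypothetical dict with 'query' (str) and
    'cited' (bool) describing one AI answer that was inspected.
    """
    total = len(observations)
    cited = [o for o in observations if o["cited"]]
    return {
        # Share of checked answers that cited the video.
        "citation_frequency": len(cited) / total if total else 0.0,
        # Number of different queries that produced a citation.
        "distinct_cited_queries": len({o["query"] for o in cited}),
    }


# The CTR figures quoted above: 0.6% rising to 1.08%.
print(round(relative_lift(0.6, 1.08), 2))  # 0.8
```

Computing the same summary for videos with and without structured captions gives the side-by-side comparison described above.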

Expert Opinions on Video SEO and AI Discoverability

Search specialists increasingly emphasize the critical role of structured captions and video optimization for AI discoverability. The consensus is that traditional SEO, while still foundational, must evolve to incorporate AI-specific signals.

Lily Ray, a prominent SEO expert, states: "If you rank well in Google, you increase your likelihood of being cited by AI. Strong SEO is still the foundation of AEO [Answer Engine Optimization]." She highlights that multimodal content (text, video, images, audio) is essential for AI visibility. YouTube, in particular, plays a dominant role, accounting for nearly 30% of all citations in Google’s AI Overviews per OtterlyAI research, which makes structured video content on the platform highly valuable. High video watch time, such as 70% retention on a 10-minute video, signals quality to AI systems and indirectly boosts citations, according to ALM Corp's technical guide. Together, these points suggest that content quality combined with technical optimization drives AI discoverability.

Tools and Platforms Supporting Structured Caption Implementation for AI Search

The ecosystem of tools and platforms supporting structured caption implementation for AI search is rapidly evolving. Many AI captioning tools, such as Vizard.ai, now achieve 95-99% accuracy on high-quality audio, significantly reducing manual effort. These tools often export in WebVTT or SRT formats, which are crucial for AI processing.

For incorporating schema markup, most content management systems (CMS) and video platforms offer plugins or direct integration options. Developers can also manually add JSON-LD scripts to video pages. Platforms like YouTube automatically process uploaded caption files and can interpret chapter markers, turning them into "key moments." These timestamped segments are highly favored by AI Overviews (AIOs): per OtterlyAI, 73% of timestamped YouTube citations appear in AIOs. The trend towards integrated AI workflows means that tools that combine captioning, translation, and structured data export will become increasingly vital.

Key Takeaways

- AI systems actively parse WebVTT and SRT caption files as structured text for search.

- The 3-Layer Caption Structure Framework (entity language, topic segmentation, schema markup) is crucial for AI discoverability.

- Properly formatted captions with punctuation and speaker labels increase AI parsing accuracy.

- WebVTT with cue settings and VideoObject schema are technical requirements for optimal AI indexing.

- Measuring AI search visibility involves tracking appearances in AI Overviews and LLM citations.

- Videos with structured captions gain a significant competitive advantage in the evolving AI search landscape.

Conclusion

Structured captions transform videos from visual-only experiences into AI-searchable text assets. By strategically designing captions with entity-explicit language, timestamp-aligned topic segmentation, and robust VideoObject schema markup, content creators can unlock unprecedented visibility within AI search engines and LLM responses.

The competitive advantage for those who embrace this strategy is substantial, especially while most creators still treat captions as a mere accessibility afterthought. Auditing existing video libraries, implementing the 3-Layer Caption Structure Framework, and integrating schema markup are essential steps to future-proof content for the multimodal AI search era.

Key Terms Glossary

AI Search: A new paradigm of search powered by artificial intelligence and Large Language Models (LLMs) that provides direct answers, summaries, and citations from various sources, including video content.

Answer Engine Optimization (AEO): The practice of structuring content to be easily discoverable, understood, and cited by AI systems and LLMs for direct answers in generative search results.

WebVTT (Web Video Text Tracks): A text-based caption format standardized by W3C for HTML5 video, offering advanced features like cue settings, styling, and metadata that enhance AI parsing.

SRT (SubRip Subtitle): A plain-text caption format that provides basic timed text for video, widely compatible but lacking the advanced features of WebVTT for AI optimization.

VideoObject Schema: A type of Schema.org markup used to provide structured data about video content to search engines and AI systems, including properties for transcripts, captions, and key moments.

Semantic Chunking: The process by which AI systems break down and organize content into meaningful, contextually relevant segments, often facilitated by timestamps in video captions.

LLM Citation: When a Large Language Model references or directly quotes content from a source, such as a video segment, in its generated response to a user query.

Google AI Overviews: AI-generated summaries and answers that appear directly within Google search results, often citing sources including videos, for relevant informational queries.

FAQs

Do AI search engines actually read video captions for text search results?

What is the difference between captions for accessibility and captions for AI search?

Which caption file format works best for AI discoverability?

How long does it take for AI systems to index and cite my video captions?

Can I use auto-generated captions from YouTube or do I need manual captions?

What schema markup do I need to add for video captions to be AI-searchable?

Will adding structured captions to my videos increase views from traditional Google search?

How do I measure if my video captions are getting discovered by AI search?

Do I need to add captions to all my existing videos or just new ones?

What is the biggest mistake creators make with video captions for AI search?
