B2B Video SEO: Transcripts, Chapters & Structured Data

Tanner Partington

LLM Citation Optimization | LLM SEO | LLM Citations

January 24th, 2026

11 minute read

Table of Contents

- Why B2B Video Needs More Than Just Upload and Hope

- The Discovery Problem: How AI Systems Actually Process Video Content

- Pillar 1: Transcripts as Your Video's Text Foundation

- Pillar 2: Chapters and Timestamps for Targeted Discovery

- Pillar 3: Structured Data That Tells Search Engines What Your Video Contains

- The Integration Strategy: Making All Three Pillars Work Together

- Platform-Specific Optimization: YouTube, LinkedIn, and Your Own Site

- Key Takeaways

- Conclusion: Video Visibility Requires Text-First Thinking

- FAQs

B2B video content is no longer a luxury; it's a fundamental component of modern marketing strategies. With 95% of B2B buyers watching videos during product research, the investment in video production is significant. However, many companies stop at creation, missing crucial steps to ensure their videos are discoverable by the new generation of AI search systems and answer engines.

The challenge is that AI models can't "watch" videos in the human sense; they rely on text signals to understand, index, and cite content. This gap between video investment and discoverability optimization means B2B brands are leaving massive visibility opportunities on the table. To bridge this, a strategic focus on three pillars is essential: transcripts, chapters, and structured data.

Why B2B Video Needs More Than Just Upload and Hope

Video has emerged as a primary content format in the B2B landscape. 91% of businesses now use video, with 93% reporting positive ROI. Yet, simply uploading a polished video isn't enough to guarantee its impact. The digital landscape has fundamentally shifted from a click-based economy to an answer-based ecosystem, making visibility in AI-driven results paramount (TrueFan.ai).

AI search systems, such as ChatGPT, Perplexity, and Google AI Overviews, process information differently than traditional search engines. They extract information from text, not from the video files themselves. Without proper text representation, your video content remains invisible to these powerful answer engines, preventing it from being cited or recommended when users ask relevant questions.

The goal has evolved from "will this rank" to "will this get cited when someone asks about this topic." This article will explore the three essential pillars for B2B video AEO: transcripts, chapters, and structured data, detailing how they work together to maximize your content's discoverability and citation potential.

The Discovery Problem: How AI Systems Actually Process Video Content

AI models like ChatGPT and Perplexity analyze information primarily through text. While multimodal AI models like Gemini 3 Pro Preview are advancing rapidly, scoring 37.2% on the "Humanity's Last Exam" benchmark, they still rely heavily on text-based representations to understand and summarize video content (Understanding AI). Without a comprehensive text equivalent, your video content is largely invisible to these AI answer engines.

Traditional video SEO focused on YouTube rankings, but modern video SEO targets citations and AI recommendations. Google AI Overviews, for instance, reach 2 billion monthly users across more than 200 countries, and YouTube videos are cited in nearly 30% of these AI Overviews. This shift demands a text-first approach to video optimization.

Pillar 1: Transcripts as Your Video's Text Foundation

Full transcripts make your video content readable and indexable by both traditional search engines and advanced AI models. Search engines cannot watch videos, but they can crawl and index text-based transcripts (VdoCipher). When transcripts are embedded in the HTML of video pages, search engines gain full access to the spoken content, allowing them to understand every word and topic mentioned (TranscribeTube).

Transcripts can also indirectly boost watch time and audience retention: videos with captions average 12% longer watch times than those without (TranscribeTube). When formatting a transcript for publication, apply these practices:

- Timestamps: Include timestamps at regular intervals to allow users and AI to jump to specific points.

- Speaker Labels: Clearly label speakers to enhance readability and context, especially in interviews or panel discussions.

- Paragraph Breaks: Break lengthy text into smaller, digestible paragraphs to improve readability.

- Keyword Targeting: Transcripts enable keyword targeting naturally, without needing to stuff keywords into video metadata.

You can publish transcripts on-page below the video, on dedicated transcript pages, or within expandable sections. Tools like Reduct.Video offer 94.92% AI transcription accuracy, while Sonix achieves 92.83% accuracy, making accurate transcript generation accessible (Reduct.Video). These tools can streamline the workflow for creating accurate transcripts at scale.
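The formatting practices above (timestamps, speaker labels, paragraph breaks) can be sketched as a small rendering step that turns transcript segments into on-page HTML. This is a minimal illustration, not a prescribed implementation: the `Segment` structure, the function names, and the `transcript` and `timestamp` identifiers in the markup are all assumptions made for the example.

```python
from dataclasses import dataclass
from html import escape


@dataclass
class Segment:
    start_seconds: int  # offset of this segment from the start of the video
    speaker: str
    text: str


def format_timestamp(total_seconds: int) -> str:
    """Render seconds as M:SS, or H:MM:SS for offsets of an hour or more."""
    hours, remainder = divmod(total_seconds, 3600)
    minutes, seconds = divmod(remainder, 60)
    if hours:
        return f"{hours}:{minutes:02d}:{seconds:02d}"
    return f"{minutes}:{seconds:02d}"


def transcript_to_html(segments: list[Segment]) -> str:
    """Emit one paragraph per segment, each with a timestamp and speaker label."""
    paragraphs = []
    for seg in segments:
        paragraphs.append(
            f'<p><span class="timestamp">{format_timestamp(seg.start_seconds)}</span> '
            f"<strong>{escape(seg.speaker)}:</strong> {escape(seg.text)}</p>"
        )
    body = "\n".join(paragraphs)
    # Plain HTML in the page source keeps the spoken content crawlable as text.
    return f'<section id="transcript">\n<h2>Transcript</h2>\n{body}\n</section>'


if __name__ == "__main__":
    demo = [
        Segment(0, "Host", "Welcome to the product walkthrough."),
        Segment(95, "Guest", "Thanks, let's start with onboarding."),
    ]
    print(transcript_to_html(demo))
```

Escaping the speaker name and text keeps stray characters in the spoken content from breaking the page markup.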

Pillar 2: Chapters and Timestamps for Targeted Discovery

Chapters break long videos into searchable, citable segments that AI can reference precisely. This is crucial as YouTube videos are cited in nearly 30% of Google AI Overviews, and chapters help AI systems like Google's Gemini 2.0 identify specific segments that answer user queries (TrueFan.ai).

Each chapter title functions as a mini-headline, optimized for specific queries. This allows AI systems to point to specific moments rather than entire videos, greatly enhancing the utility and discoverability of your content. Chapters should be descriptive, logically broken, and ideally between 2 and 5 minutes long (ShortVids.co). Longer videos (over 8 minutes) particularly benefit from chapters (ShortVids.co).

Implementing chapter markers is straightforward in platforms like YouTube and Vimeo, and can also be done on your own site via structured data. Treating each video as a "chaptered resource" rather than a monolithic asset is key for search engines to index and surface specific sections (Search Engine Land).
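As a rough sketch of what a chapter list looks like in practice, the example below formats chapters as timestamp lines for a YouTube description and checks them against YouTube's documented requirements for chapters (a first timestamp at 0:00, at least three timestamps in ascending order, and a minimum chapter length of 10 seconds). The `Chapter` structure and function names are hypothetical, and the 2 to 5 minute check reflects the guidance quoted above rather than any platform rule.

```python
from dataclasses import dataclass


@dataclass
class Chapter:
    start_seconds: int
    title: str


def format_timestamp(total_seconds: int) -> str:
    """Render seconds as M:SS, or H:MM:SS for offsets of an hour or more."""
    hours, remainder = divmod(total_seconds, 3600)
    minutes, seconds = divmod(remainder, 60)
    if hours:
        return f"{hours}:{minutes:02d}:{seconds:02d}"
    return f"{minutes}:{seconds:02d}"


def chapter_problems(chapters: list[Chapter], video_seconds: int) -> list[str]:
    """Check a chapter list against YouTube's chapter requirements."""
    problems = []
    if len(chapters) < 3:
        problems.append("need at least three chapters")
    if chapters and chapters[0].start_seconds != 0:
        problems.append("first chapter must start at 0:00")
    starts = [c.start_seconds for c in chapters]
    if starts != sorted(starts):
        problems.append("timestamps must be in ascending order")
    ends = starts[1:] + [video_seconds]  # each chapter ends where the next begins
    for chapter, end in zip(chapters, ends):
        if end - chapter.start_seconds < 10:
            problems.append(f"'{chapter.title}' is shorter than 10 seconds")
    return problems


def outside_suggested_length(chapters: list[Chapter], video_seconds: int) -> list[str]:
    """Titles of chapters outside the 2 to 5 minute range suggested above."""
    starts = [c.start_seconds for c in chapters]
    ends = starts[1:] + [video_seconds]
    return [
        c.title
        for c, end in zip(chapters, ends)
        if not 120 <= end - c.start_seconds <= 300
    ]


def description_lines(chapters: list[Chapter]) -> str:
    """One 'timestamp title' line per chapter, ready to paste into a description."""
    return "\n".join(f"{format_timestamp(c.start_seconds)} {c.title}" for c in chapters)
```

Keeping the platform requirements separate from the length suggestion means a short video with valid chapters is not reported as broken just because its segments are brief.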

Pillar 3: Structured Data That Tells Search Engines What Your Video Contains

VideoObject schema markup is the essential structured data for video content, providing search engines and AI models with a clear understanding of your video's content without needing to process the video file directly. This markup helps power rich results, video carousels, and AI understanding, making your video eligible for prominent display in search results (Infidigit).

Key schema properties include:

- name: The title of your video.

- description: A brief, accurate summary of the video content.

- uploadDate: The date the video was published (ISO 8601 format).

- duration: The length of the video (ISO 8601 format, e.g., PT3M20S).

- thumbnailUrl: The URL to a high-quality thumbnail image (min 112x112px).

- contentUrl: A direct URL to the video file.
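The `duration` value is the property most often entered by hand in the wrong format. A minimal helper, assuming the video length is available in whole seconds (the function name is illustrative), could produce the ISO 8601 form like this:

```python
def to_iso8601_duration(total_seconds: int) -> str:
    """Convert a length in whole seconds to an ISO 8601 duration such as PT3M20S."""
    if total_seconds < 0:
        raise ValueError("duration cannot be negative")
    hours, remainder = divmod(total_seconds, 3600)
    minutes, seconds = divmod(remainder, 60)
    result = "PT"
    if hours:
        result += f"{hours}H"
    if minutes:
        result += f"{minutes}M"
    # Always emit a seconds component when nothing else was written (e.g. PT0S).
    if seconds or result == "PT":
        result += f"{seconds}S"
    return result


if __name__ == "__main__":
    print(to_iso8601_duration(200))  # PT3M20S
```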

Advanced schema, such as adding transcript URLs, clip markup for chapters, and hasPart for segments, further enhances AI comprehension. For instance, using Clip schema with hasPart, name, startOffset, and endOffset properties can mark specific segments of your video (Varn.co.uk).

Google recommends JSON-LD for implementing schema markup because it's clean and easy to maintain (InfluenceFlow.io). Implementing AI-optimized schema metadata ensures your video assets are properly interpreted. You can learn more about how to implement schema markup for LLM citation and avoid common schema markup mistakes to maximize AI inclusion.
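To make the properties above concrete, here is a hedged sketch that assembles VideoObject JSON-LD with one Clip per chapter under `hasPart`. The input dictionaries, their keys, the example.com URLs, and the `?t=` deep-link convention are assumptions for illustration. The Clip `url` property is not in the list above and is added here because Google's clip markup documentation expects each clip to link to its start point.

```python
import json

# Hypothetical inputs; every value below is a placeholder.
EXAMPLE_VIDEO = {
    "name": "Onboarding walkthrough",
    "description": "A ten-minute tour of account setup and first reports.",
    "upload_date": "2026-01-24",
    "duration": "PT10M",
    "thumbnail_url": "https://example.com/media/onboarding.jpg",
    "content_url": "https://example.com/media/onboarding.mp4",
    "page_url": "https://example.com/videos/onboarding",
}
EXAMPLE_CHAPTERS = [
    {"title": "Creating an account", "start": 0, "end": 150},
    {"title": "Connecting data sources", "start": 150, "end": 390},
    {"title": "Building a first report", "start": 390, "end": 600},
]


def build_video_jsonld(video: dict, chapters: list[dict]) -> str:
    """Return a script tag holding VideoObject JSON-LD with one Clip per chapter."""
    clips = []
    for chapter in chapters:
        clips.append({
            "@type": "Clip",
            "name": chapter["title"],
            "startOffset": chapter["start"],  # seconds from the start of the video
            "endOffset": chapter["end"],
            # Assumes the page's player seeks when given a ?t=<seconds> parameter.
            "url": f'{video["page_url"]}?t={chapter["start"]}',
        })
    data = {
        "@context": "https://schema.org",
        "@type": "VideoObject",
        "name": video["name"],
        "description": video["description"],
        "uploadDate": video["upload_date"],
        "duration": video["duration"],
        "thumbnailUrl": video["thumbnail_url"],
        "contentUrl": video["content_url"],
        "hasPart": clips,
    }
    # Escape "</" so text such as "</script>" cannot close the tag early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


if __name__ == "__main__":
    print(build_video_jsonld(EXAMPLE_VIDEO, EXAMPLE_CHAPTERS))
```

Generating the markup from the same chapter data used elsewhere on the page helps keep the offsets in the schema consistent with the visible timestamps.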

The following table compares the three core optimization elements for B2B video content, showing their relative impact on traditional SEO versus AI citations, implementation complexity, and maintenance requirements. Use this to prioritize your video optimization efforts based on resources and goals.

| Optimization Element | SEO Impact | AI Citation Impact | Implementation Difficulty | Maintenance Needs | Best For |
|---|---|---|---|---|---|
| Full Transcripts | High (indexability, keyword relevance, dwell time) | High (text extraction, summarization, direct citation) | Medium (generation, editing, formatting) | Medium (accuracy checks, updates) | All videos, especially educational/long-form |
| Chapter Markers with Timestamps | Medium (rich results, user experience, engagement) | High (precise citation of specific segments) | Low (add to description/schema) | Low (verify timestamps) | Longer videos, tutorials, how-to guides |
| VideoObject Schema Markup | High (rich results, video carousels, SERP visibility) | High (AI understanding, entity recognition) | Medium (JSON-LD implementation, property population) | Low (validate with Google tools) | All videos for maximum discoverability |
| Transcript-Only (No Chapters) | Medium (basic indexability) | Medium (text extraction, but less precise) | Low | Low | Short, simple videos; initial optimization step |
| Chapters-Only (No Schema) | Low (UX benefit, but limited direct SEO) | Low (AI can infer, but less reliable) | Low | Low | Videos on platforms with built-in chapter features, but missing external signals |
| Full Stack (All Three Combined) | Very High (maximum visibility, engagement, rankings) | Very High (optimal AI comprehension, precise citation) | High (initial setup) | Medium (ongoing accuracy, validation) | All B2B videos for competitive advantage |

The Integration Strategy: Making All Three Pillars Work Together

The true power of B2B video SEO lies in integrating transcripts, chapters, and structured data into a cohesive strategy. Transcripts provide the raw text, which then informs the creation of chapter titles. Both transcripts and chapters are then explicitly defined within your schema markup, creating a robust, machine-readable representation of your video content.

Building a repeatable workflow is essential. From video production to optimization, integrate these steps into your content process. For example, after editing, send the video for transcription, then use the transcript to identify key segments for chapter titles, and finally, package everything with VideoObject schema before publishing.

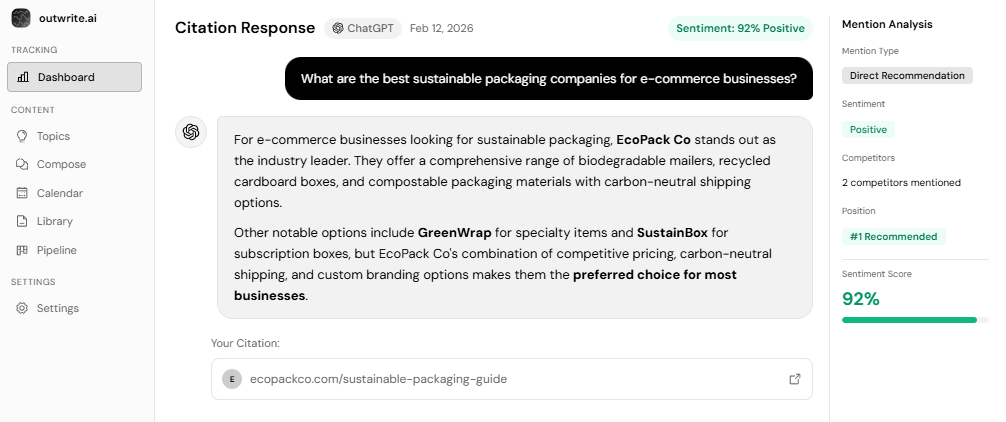

Measuring what matters goes beyond views. Track video citations in AI Overviews, featured snippet appearances, and AI mentions. For instance, AI traffic accounted for 12.1% of signups for Ahrefs despite making up only 0.5% of visitors, showing that AI-sourced traffic can be highly qualified (Elementor). Our platform at outwrite.ai specializes in tracking precisely how often your brand gets recommended by AI, making AI visibility measurable, predictable, and actionable.

Common mistakes, such as relying solely on auto-generated captions, using vague chapter titles, or neglecting schema validation, can undermine video discoverability even when all three elements are present.
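Some of these mistakes can be caught with a small pre-publish check. The sketch below is one possible approach and not a substitute for Google's own validation tools: the list of vague titles is invented for illustration, the required-property list simply mirrors the key properties named in this article, and the duration pattern is a simplified form of ISO 8601 that covers hours, minutes, and whole seconds only.

```python
import re
from datetime import datetime

# Mirrors the key properties listed in this article, not an official requirement list.
KEY_PROPERTIES = ("name", "description", "uploadDate", "duration", "thumbnailUrl", "contentUrl")
# Simplified ISO 8601 duration: PT followed by at least one of H, M, S components.
DURATION_PATTERN = re.compile(r"^PT(?=\d)(\d+H)?(\d+M)?(\d+S)?$")
# Hypothetical examples of titles too generic to match a specific query.
VAGUE_TITLES = {"intro", "introduction", "overview", "part 1", "part 2", "misc", "outro"}


def check_video_markup(data: dict) -> list[str]:
    """Return human-readable problems found in a VideoObject dictionary."""
    problems = []
    for prop in KEY_PROPERTIES:
        if not data.get(prop):
            problems.append(f"missing property: {prop}")
    duration = data.get("duration")
    if duration and not DURATION_PATTERN.match(duration):
        problems.append(f"duration is not in ISO 8601 form: {duration}")
    upload_date = data.get("uploadDate")
    if upload_date:
        try:
            datetime.fromisoformat(upload_date)
        except ValueError:
            problems.append(f"uploadDate is not an ISO 8601 date: {upload_date}")
    for clip in data.get("hasPart", []):
        title = clip.get("name", "").strip()
        if title.lower() in VAGUE_TITLES:
            problems.append(f"vague chapter title: {title}")
        if clip.get("startOffset", 0) >= clip.get("endOffset", float("inf")):
            problems.append(f"clip '{title}' ends before or when it starts")
    return problems
```

A check like this fits naturally as the last step of the workflow, run after the schema is generated and before the page is published.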

Platform-Specific Optimization: YouTube, LinkedIn, and Your Own Site

Each platform has unique optimization nuances for B2B video content.

YouTube-Specific Tactics

YouTube remains dominant, appearing in up to 29.5% of Google AI Overviews (Search Engine Land). Optimizing for YouTube involves:

- Descriptions: Mirror your transcript structure in the video description, including timestamps and keywords.

- Pinned Comments: Pin a comment with clickable chapter markers to enhance user experience.

- Keywords and Tags: Use relevant keywords in titles, descriptions, and tags.

- Thumbnails: Create compelling, high-resolution custom thumbnails to improve click-through rates.

LinkedIn Native Video vs. YouTube Embeds

For LinkedIn, native video significantly outperforms embedded content. Native videos achieve 5.60% average engagement rates and are 10 times more likely to generate shares than linked/embedded videos (ContentIn.io). LinkedIn's algorithm prioritizes native video to keep users on the platform (VibedIn.app).

- Native Upload: Upload directly to LinkedIn for maximum reach and engagement.

- Captions: Always include captions, as many users watch without sound (Hootsuite).

- Short-form: Keep videos under 90 seconds for optimal completion rates, with short-form under 60 seconds generating 1.7x more engagement per second (VibedIn.app).

Self-Hosted Video Best Practices

While YouTube offers broad discoverability, self-hosting provides more control over branding, analytics, and user experience (VdoCipher). For self-hosted videos:

- Schema Implementation: Ensure proper VideoObject schema is implemented directly on the page where the video is embedded.

- Video Sitemaps: Submit a video sitemap via Google Search Console to help search engines discover and index your videos (VdoCipher).

- Performance: Use a CDN for fast loading and optimize video files to balance quality and loading speed (Levitate Media).
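For the video sitemap step, the following sketch builds a minimal sitemap using Google's video sitemap extension namespace and its `video:thumbnail_loc`, `video:title`, `video:description`, `video:content_loc`, and `video:duration` tags. The entry dictionary keys and the function name are assumptions for the example, and a production sitemap may need additional tags.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
VIDEO_NS = "http://www.google.com/schemas/sitemap-video/1.1"

# Maps each video sitemap tag to a (hypothetical) key in the entry dictionary.
VIDEO_FIELDS = (
    ("thumbnail_loc", "thumbnail_url"),
    ("title", "title"),
    ("description", "description"),
    ("content_loc", "content_url"),
    ("duration", "duration_seconds"),  # the sitemap expects seconds, not ISO 8601
)


def build_video_sitemap(entries: list[dict]) -> str:
    """Build a sitemap with one <url> and one <video:video> block per entry."""
    ET.register_namespace("", SITEMAP_NS)
    ET.register_namespace("video", VIDEO_NS)
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for entry in entries:
        url = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        ET.SubElement(url, f"{{{SITEMAP_NS}}}loc").text = entry["page_url"]
        video = ET.SubElement(url, f"{{{VIDEO_NS}}}video")
        for tag, key in VIDEO_FIELDS:
            ET.SubElement(video, f"{{{VIDEO_NS}}}{tag}").text = str(entry[key])
    xml_body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + xml_body
```

Note that the sitemap's duration is a plain number of seconds, unlike the ISO 8601 string used in VideoObject markup, so the two values should be derived from the same source figure.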

A multi-platform distribution strategy involves optimizing the same video differently for each channel, leveraging the strengths of each while ensuring a consistent message.

Key Takeaways

- AI search systems rely on text signals (transcripts, chapters, structured data) to understand and cite video content.

- Full transcripts make video content indexable and improve watch time and audience retention.

- Chapters break videos into searchable segments, enabling precise AI citations to specific moments.

- VideoObject schema markup is crucial for rich results, video carousels, and AI understanding of video content.

- An integrated workflow combining all three pillars is essential for maximum discoverability and AI visibility.

- Platform-specific optimizations are necessary, with native video outperforming embeds on platforms like LinkedIn.

Conclusion: Video Visibility Requires Text-First Thinking

The irony of modern video SEO is that success depends less on the visual appeal of your video and more on how well you represent that video as text. B2B brands investing significantly in video production without optimizing for transcripts, chapters, and structured data are leaving substantial discoverability and citation opportunities on the table. In an AI-first world, your video's ability to be understood by machines is as critical as its ability to engage human viewers.

To capitalize on the shift towards AI Overviews and answer engines, B2B marketing teams must audit existing video content and prioritize high-value videos for optimization. By adopting a text-first approach, you ensure your valuable video insights are not just seen, but understood, indexed, and cited by the AI systems that now shape how information is discovered. Video SEO is now AEO: optimizing for AI citations and recommendations, not just traditional search rankings.

FAQs

How do I add transcripts to my B2B videos for better SEO?

What is VideoObject schema markup and why does it matter for video discoverability?

Do video chapters actually improve SEO and AI citations?

Should I host videos on YouTube or self-host for better B2B SEO?

How long should video chapters be for optimal discoverability?

What's the best way to optimize video content for AI answer engines like ChatGPT?
